Decision field theory

Decision field theory (DFT) is a dynamic-cognitive approach to human decision making. It is a cognitive model that describes how people actually make decisions, rather than a rational or normative theory that prescribes what people should do. It is also a dynamic rather than a static model, because it describes how a person's preferences evolve over time until a decision is reached instead of assuming a fixed state of preference. The preference evolution process is represented mathematically as a stochastic process called a diffusion process. DFT is used to predict how humans make decisions under uncertainty, how decisions change under time pressure, and how choice context changes preferences. The model predicts not only the choices that are made but also decision or response times.

The paper "Decision Field Theory" was published by Jerome R. Busemeyer and James T. Townsend in 1993.[1][2][3][4] DFT has been shown to account for many puzzling findings regarding human choice behavior, including violations of stochastic dominance, violations of strong stochastic transitivity,[5][6][7] violations of independence between alternatives, serial position effects on preference, speed–accuracy tradeoff effects, an inverse relation between choice probability and decision time, changes in decisions under time pressure, and preference reversals between choices and prices. DFT also offers a bridge to neuroscience.[8] More recently, the authors of decision field theory have also begun exploring a new theoretical direction called quantum cognition.

Introduction

The name decision field theory was chosen to reflect the fact that the inspiration for this theory comes from an earlier approach-avoidance conflict model contained in Kurt Lewin's general psychological theory, which he called field theory. DFT is a member of a general class of sequential sampling models that are commonly used in a variety of fields in cognition.[9][10][11][12][13][14][15]

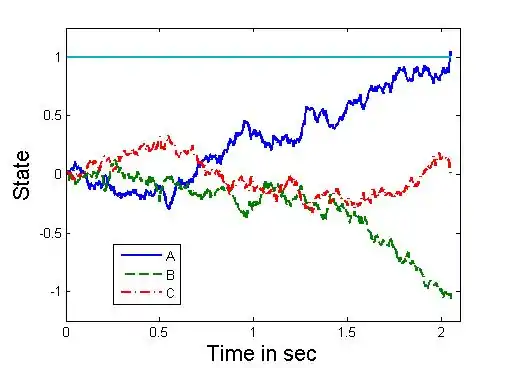

The basic ideas underlying the decision process for sequential sampling models are illustrated in Figure 1. Suppose the decision maker is initially presented with a choice between three risky prospects, A, B, and C, at time t = 0. The horizontal axis of the figure represents deliberation time (in seconds), and the vertical axis represents preference strength. Each trajectory in the figure represents the preference state for one of the risky prospects at each moment in time.[4]

Intuitively, at each moment in time, the decision maker thinks about various payoffs of each prospect, which produces an affective reaction, or valence, to each prospect. These valences are integrated across time to produce the preference state at each moment. In this example, during the early stages of processing (between 200 and 300 ms), attention is focused on advantages favoring prospect C, but later (after 600 ms) attention is shifted toward advantages favoring prospect A. The stopping rule for this process is controlled by a threshold (which is set equal to 1.0 in this example): the first prospect to reach the top threshold is accepted, which in this case is prospect A after about two seconds. Choice probability is determined by the first option to win the race and cross the upper threshold, and decision time is equal to the deliberation time required by one of the prospects to reach this threshold.[4]
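The stopping rule described above can be sketched in a few lines. This is only an illustration: the function name `first_to_threshold`, the sampling interval, and the preference trajectories are hypothetical and are not read off the figure; ties are broken in favor of the strongest preference state, which is an assumption.

```python
from typing import Dict, List, Optional, Tuple


def first_to_threshold(
    trajectories: Dict[str, List[float]],
    threshold: float,
    dt: float,
) -> Optional[Tuple[str, float]]:
    """Return (chosen option, decision time) for the first option whose
    preference state reaches the threshold, or None if none does."""
    n_steps = min(len(path) for path in trajectories.values())
    for step in range(n_steps):
        # Options whose preference state is at or above the threshold now.
        crossed = {
            name: path[step]
            for name, path in trajectories.items()
            if path[step] >= threshold
        }
        if crossed:
            # Assumed tie-break: take the strongest preference state.
            winner = max(crossed, key=crossed.get)
            return winner, step * dt
    return None  # no option reached the threshold in the recorded window


# Hypothetical preference states sampled every 0.5 s (illustrative only).
paths = {
    "A": [0.0, 0.1, 0.3, 0.7, 1.05],
    "B": [0.0, -0.1, -0.2, -0.3, -0.4],
    "C": [0.0, 0.35, 0.4, 0.2, 0.1],
}

print(first_to_threshold(paths, threshold=1.0, dt=0.5))  # ('A', 2.0)
```

The returned pair corresponds to the two quantities the model predicts: which option is chosen and how long deliberation takes.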

The threshold is an important parameter for controlling speed–accuracy tradeoffs. If the threshold is set to a lower value (about 0.30) in Figure 1, then prospect C would be chosen instead of prospect A, and the choice would be made earlier. Thus decisions can reverse under time pressure.[16] High thresholds require a strong preference state to be reached, which allows more information about the prospects to be sampled, prolonging the deliberation process and increasing accuracy. Low thresholds allow a weak preference state to determine the decision, which cuts off sampling of information about the prospects, shortening the deliberation process and decreasing accuracy. Under high time pressure, decision makers must choose a low threshold; under low time pressure, a higher threshold can be used to increase accuracy. Very careful and deliberative decision makers tend to use a high threshold, whereas impulsive and careless decision makers tend to use a low threshold.[4]

To describe the theory a bit more formally, assume that the decision maker has a choice among three actions, and suppose for simplicity that there are only four possible final outcomes. Each action is then defined by a probability distribution across these four outcomes. The affective values produced by each payoff are represented by the values $m_j$. At any moment in time, the decision maker anticipates the payoff of each action, which produces a momentary evaluation, $U_i(t)$, for action $i$. This momentary evaluation is an attention-weighted average of the affective evaluation of each payoff: $U_i(t) = \sum_j W_{ij}(t)\, m_j$. The attention weight at time $t$, $W_{ij}(t)$, for payoff $j$ offered by action $i$, is assumed to fluctuate according to a stationary stochastic process. This reflects the idea that attention shifts from moment to moment, causing changes in the anticipated payoff of each action across time.
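A minimal sketch of the momentary evaluation follows. The text only requires that the attention weights follow a stationary stochastic process; the sketch assumes one particular process in which attention lands on a single outcome at each moment, drawn with the action's own outcome probabilities. The function names, affective values, and probabilities are all hypothetical.

```python
import random
from typing import List, Sequence


def momentary_evaluation(weights: Sequence[float], m: Sequence[float]) -> float:
    """Attention-weighted sum of affective values for one action at one moment."""
    return sum(w * m_j for w, m_j in zip(weights, m))


def sample_attention(probs: Sequence[float], rng: random.Random) -> List[float]:
    """Assumed attention process: attend to exactly one outcome j, chosen
    with probability probs[j]; returns a one-hot weight vector."""
    j = rng.choices(range(len(probs)), weights=probs, k=1)[0]
    return [1.0 if k == j else 0.0 for k in range(len(probs))]


m = [-2.0, 0.0, 1.0, 4.0]       # hypothetical affective values of four outcomes
probs_a = [0.1, 0.2, 0.3, 0.4]  # hypothetical outcome distribution of one action

rng = random.Random(0)
evaluations = [
    momentary_evaluation(sample_attention(probs_a, rng), m) for _ in range(5)
]
print(evaluations)                       # fluctuates from moment to moment
print(momentary_evaluation(probs_a, m))  # evaluation under the mean attention weights
```

Under this assumed process the mean attention weight on each outcome equals its probability, so the long-run average of the momentary evaluations matches the probability-weighted sum of the affective values.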
The momentary evaluation of each action is compared with those of the other actions to form a valence for each action at each moment, $v_i(t) = U_i(t) - U_\cdot(t)$, where $U_\cdot(t)$ is the average of the momentary evaluations across all actions. The valence represents the momentary advantage or disadvantage of each action. The valences sum to zero, so the options cannot all become attractive simultaneously.

Finally, the valences are the inputs to a dynamic system that integrates them over time to generate the output preference states. The output preference state for action $i$ at time $t$ is symbolized as $P_i(t)$. The dynamic system is described by the following linear stochastic difference equation for a small time step $h$ in the deliberation process: $P_i(t+h) = \sum_j s_{ij} P_j(t) + v_i(t+h)$.

The positive self-feedback coefficient, $s_{ii} = s > 0$, controls the memory for past input valences for a preference state. Values of $s_{ii} < 1$ suggest decay in the memory or impact of previous valences over time, whereas values of $s_{ii} > 1$ suggest growth in impact over time (primacy effects). The negative lateral feedback coefficients, $s_{ij} = s_{ji} < 0$ for $i \neq j$, produce competition among actions so that the strong inhibit the weak. In other words, as preference for one action grows stronger, it moderates the preference for the other actions. The magnitudes of the lateral inhibitory coefficients are assumed to be an increasing function of the similarity between choice options. These lateral inhibitory coefficients are important for explaining the context effects on preference described later. Formally, this is a Markov process; matrix formulas have been derived for computing the choice probabilities and the distribution of choice response times.[4]
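The difference equation can be simulated directly. The sketch below is not the authors' implementation. It assumes a one-hot attention process (one outcome attended per action per step), a single lateral coefficient shared by all pairs of actions rather than one that depends on similarity, and made-up parameter values. The names `simulate_dft`, `summarize`, `PROBS`, and `M` are hypothetical.

```python
import random
from typing import Optional, Sequence, Tuple

M = [-1.0, 0.0, 1.0, 2.0]      # hypothetical affective values of four outcomes
PROBS = [
    [0.10, 0.20, 0.30, 0.40],  # action 0: highest mean evaluation
    [0.25, 0.25, 0.25, 0.25],  # action 1
    [0.40, 0.30, 0.20, 0.10],  # action 2: lowest mean evaluation
]


def simulate_dft(
    probs: Sequence[Sequence[float]],
    m: Sequence[float],
    s_self: float,
    s_lateral: float,
    threshold: float,
    rng: random.Random,
    max_steps: int = 10_000,
) -> Tuple[Optional[int], int]:
    """Run one deliberation; return (index of chosen action, number of steps)."""
    n = len(probs)
    outcomes = range(len(m))
    pref = [0.0] * n
    for step in range(1, max_steps + 1):
        # Momentary evaluations under the assumed one-hot attention process.
        u = [m[rng.choices(outcomes, weights=p, k=1)[0]] for p in probs]
        u_bar = sum(u) / n
        valence = [u_i - u_bar for u_i in u]  # valences sum to zero
        total = sum(pref)
        # New preference: self feedback + lateral feedback + new valence.
        pref = [
            s_self * pref[i] + s_lateral * (total - pref[i]) + valence[i]
            for i in range(n)
        ]
        best = max(range(n), key=pref.__getitem__)
        if pref[best] >= threshold:
            return best, step
    return None, max_steps


def summarize(threshold: float, trials: int = 1000, seed: int = 1) -> Tuple[float, float]:
    """Share of trials choosing action 0, and mean number of steps."""
    rng = random.Random(seed)
    wins, steps_total = 0, 0
    for _ in range(trials):
        choice, steps = simulate_dft(PROBS, M, 0.95, -0.02, threshold, rng)
        wins += choice == 0
        steps_total += steps
    return wins / trials, steps_total / trials


for threshold in (1.0, 8.0):
    print(threshold, summarize(threshold))
```

With these assumed parameters, raising the threshold should lengthen deliberation and raise the share of trials in which the action with the highest mean evaluation is chosen, which is the speed–accuracy tradeoff in miniature.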

The decision field theory can also be seen as a dynamic and stochastic random walk theory of decision making, presented as a model positioned between lower-level neural activation patterns and more complex notions of decision making found in psychology and economics.[4]

Explaining context effects

The DFT is capable of explaining context effects that many decision making theories are unable to explain.[17]

Many classic probabilistic models of choice satisfy two principles of rational choice. The first is called independence of irrelevant alternatives: if the probability of choosing option X is greater than that of choosing option Y when only X and Y are available, then option X should remain more likely to be chosen than Y even when a new option Z is added to the choice set. In other words, adding an option should not change the preference relation between the original pair of options. The second principle is called regularity: the probability of choosing option X from a set containing only X and Y should be greater than or equal to the probability of choosing option X from a larger set containing X, Y, and a new option Z. In other words, adding an option can only decrease, or leave unchanged, the probability of choosing either of the original options. However, consumer researchers studying human choice behavior have found context effects that systematically violate both of these principles.
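Both principles can be written as simple checks on choice probabilities. The sketch below follows the wording above; the function names and the example probabilities are hypothetical and chosen so that both principles hold.

```python
from typing import Dict


def preserves_order(
    small: Dict[str, float], large: Dict[str, float], x: str, y: str
) -> bool:
    """Independence of irrelevant alternatives as stated in the text:
    if x is more likely than y in the small set, it must stay so in the large set."""
    return not (small[x] > small[y]) or large[x] > large[y]


def satisfies_regularity(small: Dict[str, float], large: Dict[str, float]) -> bool:
    """Regularity: no original option becomes more likely when options are added."""
    return all(large[option] <= small[option] for option in small)


binary = {"X": 0.6, "Y": 0.4}                # hypothetical choice probabilities
triadic = {"X": 0.45, "Y": 0.30, "Z": 0.25}  # hypothetical, after adding Z

print(preserves_order(binary, triadic, "X", "Y"))  # True
print(satisfies_regularity(binary, triadic))       # True
```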

The first context effect is the similarity effect. This effect occurs with the introduction of a third option S that is similar to X but not dominated by X. For example, suppose X is a BMW, Y is a Ford Focus, and S is an Audi. The Audi is similar to the BMW because both are high quality and sporty but not very economical. The Ford Focus differs from the BMW and Audi because it is more economical but lower quality. Suppose that in a binary choice, X is chosen more frequently than Y. Next, suppose a new choice set is formed by adding an option S that is similar to X. If X is similar to S, and both are very different from Y, then people tend to view X and S as one group and Y as another option. Thus the probability of choosing Y remains the same whether or not S is presented as an option, whereas the probability of choosing X decreases by approximately half with the introduction of S. This causes the probability of choosing X to drop below that of Y when S is added to the choice set. This violates the independence of irrelevant alternatives property because X is chosen more frequently than Y in a binary choice, but Y is chosen more frequently than X when S is added.
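The arithmetic behind this reversal can be made concrete. The binary choice probabilities below (0.6 and 0.4) are illustrative assumptions, not values from the literature; the even split of X's share with S follows the description above.

```latex
\begin{align*}
P(X \mid \{X, Y\}) &= 0.6, & P(Y \mid \{X, Y\}) &= 0.4 \\
P(Y \mid \{X, Y, S\}) &= 0.4, & P(X \mid \{X, Y, S\}) &= P(S \mid \{X, Y, S\}) = \tfrac{0.6}{2} = 0.3
\end{align*}
```

With these numbers X leads Y by 0.6 to 0.4 in the binary choice but trails it by 0.3 to 0.4 once S is available, so the ordering of X and Y reverses.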

The second context effect is the compromise effect. This effect occurs when the choice set contains an option C that is a compromise between X and Y. For example, when choosing between C = Honda and X = BMW, the latter is less economical but higher quality. However, if another option Y = Ford Focus is added to the choice set, then C = Honda becomes a compromise between X = BMW and Y = Ford Focus. Suppose that in a binary choice, X (BMW) is chosen more often than C (Honda). When option Y (Ford Focus) is added to the choice set, option C (Honda) becomes the compromise between X (BMW) and Y (Ford Focus), and C is then chosen more frequently than X. This is another violation of the independence of irrelevant alternatives property because X is chosen more often than C in a binary choice, but C is chosen more often than X when option Y is added to the choice set.

The third effect is called the attraction effect. This effect occurs when the third option D is very similar to X but is defective compared to X. For example, D may be a new sporty car developed by a new manufacturer that is similar to option X = BMW but costs more than the BMW. There is therefore little or no reason to choose D over X, and in this situation D is rarely chosen over X. However, adding D to a choice set boosts the probability of choosing X. In particular, the probability of choosing X from a set containing X, Y, and D is larger than the probability of choosing X from a set containing only X and Y. The defective option D makes X shine, and this attraction effect violates the principle of regularity, which says that adding another option cannot increase the popularity of an option relative to the original subset.

DFT accounts for all three effects using the same principles and same parameters across all three findings. According to DFT, the attention switching mechanism is crucial for producing the similarity effect, but the lateral inhibitory connections are critical for explaining the compromise and attraction effects. If the attention switching process is eliminated, then the similarity effect disappears, and if the lateral connections are all set to zero, then the attraction and compromise effects disappear. This property of the theory entails an interesting prediction about the effects of time pressure on preferences. The contrast effects produced by lateral inhibition require time to build up, which implies that the attraction and compromise effects should become larger under prolonged deliberation (see Roe, Busemeyer & Townsend 2001). Alternatively, if context effects are produced by switching from a weighted average rule under binary choice to a quick heuristic strategy for the triadic choice, then these effects should get larger under time pressure. Empirical tests show that prolonging the decision process increases the effects[18][19] and time pressure decreases the effects.[20]
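The role of the lateral connections can be illustrated by building the feedback coefficients from the positions of the options in attribute space. The article states only that inhibition grows with similarity; the Gaussian function of distance used here, the parameter values, and the attribute coordinates are assumptions made for illustration, and `feedback_matrix` is a hypothetical name.

```python
import math
from typing import List, Sequence


def feedback_matrix(
    options: Sequence[Sequence[float]],
    s_self: float = 0.95,
    max_inhibition: float = 0.05,
    width: float = 1.0,
) -> List[List[float]]:
    """Feedback coefficients: s_self on the diagonal, and negative lateral
    terms that shrink toward zero as the distance between options grows."""
    n = len(options)
    s = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                s[i][j] = s_self
            else:
                d2 = sum((a - b) ** 2 for a, b in zip(options[i], options[j]))
                # Assumed similarity function: Gaussian in attribute distance.
                s[i][j] = -max_inhibition * math.exp(-d2 / (2 * width ** 2))
    return s


# Hypothetical (economy, quality) coordinates: X, Y, and a decoy D close to X.
OPTIONS = [(1.0, 3.0), (3.0, 1.0), (0.9, 2.5)]

for row in feedback_matrix(OPTIONS):
    print([round(value, 4) for value in row])
```

In this sketch X and D inhibit each other far more strongly than X and Y do. Calling `feedback_matrix(OPTIONS, max_inhibition=0.0)` sets every lateral coefficient to zero, which corresponds to the case described above in which the attraction and compromise effects disappear.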

Neuroscience

Decision field theory has demonstrated an ability to account for a wide range of findings from behavioral decision making that the purely algebraic and deterministic models often used in economics and psychology cannot explain. Recent studies that record neural activations in non-human primates during perceptual decision making tasks have revealed that neural firing rates closely mimic the accumulation of preference theorized by behaviorally-derived diffusion models of decision making.[8]

The decision processes of sensory-motor decisions are beginning to be fairly well understood both at the behavioral and neural levels. Typical findings indicate that neural activation regarding stimulus movement information is accumulated across time up to a threshold, and a behavioral response is made as soon as the activation in the recorded area exceeds the threshold.[21][22][23][24][25] A conclusion that one can draw is that the neural areas responsible for planning or carrying out certain actions are also responsible for deciding the action to carry out, a decidedly embodied notion.[8]

Mathematically, the spike activation pattern, as well as the choice and response time distributions, can be well described by what are known as diffusion models—especially in two-alternative forced choice tasks.[26] Diffusion models, such as the decision field theory, can be viewed as stochastic recurrent neural network models, except that the dynamics are approximated by linear systems. The linear approximation is important for maintaining a mathematically tractable analysis of systems perturbed by noisy inputs. In addition to these neuroscience applications, diffusion models (or their discrete time, random walk, analogues) have been used by cognitive scientists to model performance in a variety of tasks ranging from sensory detection,[13] and perceptual discrimination,[11][12][14] to memory recognition,[15] and categorization.[9][10] Thus, diffusion models provide the potential to form a theoretical bridge between neural models of sensory-motor tasks and behavioral models of complex-cognitive tasks.[8]
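The discrete-time random walk analogue mentioned above is easy to sketch for a two-alternative task. The code below is a generic textbook random walk with unit steps and two symmetric absorbing boundaries, not a model fitted to any data set; the function names and parameter values are hypothetical. The closed form is the standard gambler's ruin result.

```python
import random
from typing import Tuple


def upper_boundary_probability(p_up: float, a: int) -> float:
    """Probability that a walk with +1/-1 steps, started at 0, reaches +a
    before -a, where p_up is the chance of a +1 step (0 < p_up < 1)."""
    if p_up == 0.5:
        return 0.5
    ratio = (1.0 - p_up) / p_up
    return 1.0 / (1.0 + ratio ** a)


def simulate_walk(p_up: float, a: int, rng: random.Random) -> Tuple[bool, int]:
    """Run one walk; return (reached the upper boundary?, number of steps)."""
    position, steps = 0, 0
    while abs(position) < a:
        position += 1 if rng.random() < p_up else -1
        steps += 1
    return position >= a, steps


rng = random.Random(42)
trials = 5000
results = [simulate_walk(0.6, 3, rng) for _ in range(trials)]
share_upper = sum(hit for hit, _ in results) / trials
mean_steps = sum(steps for _, steps in results) / trials

print(share_upper)                         # simulated choice probability
print(upper_boundary_probability(0.6, 3))  # closed form, 27/35 (about 0.771)
print(mean_steps)                          # simulated mean decision time in steps
```

The simulated share of walks ending at the upper boundary should be close to the closed-form value, and the step counts give the corresponding response time distribution.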

Notes

- Busemeyer, J. R.; Townsend, J. T. (1993). "Decision field theory: A dynamic-cognitive approach to decision making in an uncertain environment". Psychological Review. 100 (3): 432–459.

- Busemeyer, J. R.; Diederich, A. (2002). "Survey of decision field theory". Mathematical Social Sciences. 43 (3): 345–370.

- Busemeyer, J. R.; Johnson, J. G. (2004). "Computational models of decision making". Blackwell Handbook of Judgment and Decision Making. pp. 133–154.

- Busemeyer, J. R.; Johnson, J. G. (2008). "Microprocess models of decision making". Cambridge Handbook of Computational Psychology. pp. 302–321.

- Oliveira, I.F.D.; Zehavi, S.; Davidov, O. (August 2018). "Stochastic transitivity: Axioms and models". Journal of Mathematical Psychology. 85: 25–35. doi:10.1016/j.jmp.2018.06.002. ISSN 0022-2496.

- Regenwetter, Michel; Dana, Jason; Davis-Stober, Clintin P. (2011). "Transitivity of preferences". Psychological Review. 118 (1): 42–56. doi:10.1037/a0021150. ISSN 1939-1471. PMID 21244185.

- Tversky, Amos (1969). "Intransitivity of preferences". Psychological Review. 76 (1): 31–48. doi:10.1037/h0026750. ISSN 0033-295X.

- Busemeyer, J. R.; Jessup, R. K.; Johnson, J. G.; Townsend, J. T. (2006). "Building bridges between neural models and complex decision making behaviour". Neural Networks. 19 (8): 1047–1058. doi:10.1016/j.neunet.2006.05.043. PMID 16979319.

- Ashby, F. G. (2000). "A stochastic version of general recognition theory". Journal of Mathematical Psychology. 44 (2): 310–329. doi:10.1006/jmps.1998.1249. PMID 10831374.

- Nosofsky, R. M.; Palmeri, T. J. (1997). "An exemplar-based random walk model of speeded classification". Psychological Review. 104 (2): 266–300. doi:10.1037/0033-295X.104.2.266. PMID 9127583.

- Laming, D. R. (1968). Information theory of choice-reaction times. New York: Academic Press. OCLC 425332.

- Link, S. W.; Heath, R. A. (1975). "A sequential theory of psychological discrimination". Psychometrika. 40: 77–111. doi:10.1007/BF02291481. S2CID 49042143.

- Smith, P. L. (1995). "Psychophysically principled models of visual simple reaction time". Psychological Review. 102 (3): 567–593. doi:10.1037/0033-295X.102.3.567.

- Usher, M.; McClelland, J. L. (2001). "The time course of perceptual choice: the leaky, competing accumulator model". Psychological Review. 108 (3): 550–592. doi:10.1037/0033-295X.108.3.550. PMID 11488378.

- Ratcliff, R. (1978). "A theory of memory retrieval". Psychological Review. 85 (2): 59–108. doi:10.1037/0033-295X.85.2.59.

- Diederich, A. (2003). "MDFT account of decision making under time pressure". Psychonomic Bulletin and Review. 10 (1): 157–166. doi:10.3758/BF03196480. PMID 12747503.

- Roe, R. M.; Busemeyer, J. R.; Townsend, J. T. (2001). "Multi-alternative decision field theory: A dynamic connectionist model of decision-making". Psychological Review. 108 (2): 370–392. doi:10.1037/0033-295X.108.2.370. PMID 11381834.

- Pettibone, J. C. (2012). "Testing the effect of time pressure on asymmetric dominance and compromise decoys in choice" (PDF). Judgment and Decision Making. 7 (4): 513–523.

- Simonson, I. (1989). "Choice based on reasons: The case of attraction and compromise effects". Journal of Consumer Research. 16 (2): 158–174. doi:10.1086/209205.

- Dhar, R.; Nowlis, S. M.; Sherman, S. J. (2000). "Trying hard or hardly trying: An analysis of context effects in choice". Journal of Consumer Psychology. 9 (4): 189–200. doi:10.1207/S15327663JCP0904_1.

- Schall, J. D. (2003). "Neural correlates of decision processes: neural and mental chronometry". Current Opinion in Neurobiology. 13 (2): 182–186. doi:10.1016/S0959-4388(03)00039-4. PMID 12744971. S2CID 2816799.

- Gold, J. I.; Shadlen, M. N. (2000). "Representation of a perceptual decision in developing oculomotor commands". Nature. 404 (6776): 390–394. Bibcode:2000Natur.404..390G. doi:10.1038/35006062. PMID 10746726. S2CID 4410921.

- Mazurek, M. E.; Roitman, J. D.; Ditterich, J.; Shadlen, M. N. (2003). "A role for neural integrators in perceptual decision making". Cerebral Cortex. 13 (11): 1257–1269. doi:10.1093/cercor/bhg097. PMID 14576217.

- Ratcliff, R.; Cherian, A.; Segraves, M. (2003). "A comparison of macaque behavior and superior colliculus neuronal activity to predictions from models of two-choice decisions". Journal of Neurophysiology. 90 (3): 1392–1407. doi:10.1152/jn.01049.2002. PMID 12761282.

- Shadlen, M. N.; Newsome, W. T. (2001). "Neural basis of a perceptual decision in the parietal cortex (area LIP) of the rhesus monkey". Journal of Neurophysiology. 86 (4): 1916–1936. doi:10.1152/jn.2001.86.4.1916. PMID 11600651.

- For a summary, see Smith, P. L.; Ratcliff, R. (2004). "Psychology and neurobiology of simple decisions". Trends in Neurosciences. 27 (3): 161–168. doi:10.1016/j.tins.2004.01.006. PMID 15036882. S2CID 6182265.

References

- Busemeyer, J. R.; Diederich, A. (2002). "Survey of decision field theory" (PDF). Mathematical Social Sciences. 43 (3): 345–370. doi:10.1016/S0165-4896(02)00016-1.

- Busemeyer, J. R.; Johnson, J. G. (2004). "Computational models of decision making" (PDF). In Koehler, D.; Harvey, N. (eds.). Handbook of Judgment and Decision Making. Blackwell. pp. 133–154.

- Busemeyer, J. R.; Johnson, J. G. (2008). "Micro-process models of decision-making" (PDF). In Sun, R. (ed.). Cambridge Handbook of Computational Cognitive Modeling. Cambridge University Press.