Loss of significance

Loss of significance is an undesirable effect in calculations using finite-precision arithmetic such as floating-point arithmetic. It occurs when an operation on two numbers increases relative error substantially more than it increases absolute error, for example in subtracting two nearly equal numbers (known as catastrophic cancellation). The effect is that the number of significant digits in the result is reduced unacceptably. Ways to avoid this effect are studied in numerical analysis.

Demonstration of the problem

The effect can be demonstrated with decimal numbers. The following example demonstrates loss of significance for a decimal floating-point data type with 10 significant digits:

Consider the decimal number

x = 0.1234567891234567890

A floating-point representation of this number on a machine that keeps 10 floating-point digits would be

y = 0.1234567891

which is fairly close when measuring the error as a percentage of the value. It is very different when measured in order of precision. The value 'x' is accurate to $10\times10^{-19}$, while the value 'y' is only accurate to $10\times10^{-10}$.

Now subtract the nearby value 0.1234567890 from x:

x − 0.1234567890 = 0.1234567891234567890 − 0.1234567890000000000

The answer, accurate to 20 significant digits, is

0.0000000001234567890

However, on the 10-digit floating-point machine, the calculation yields

0.1234567891 − 0.1234567890 = 0.0000000001

In both cases the result is accurate to the same order of magnitude as the inputs (−20 and −10 respectively). In the second case, the answer seems to have one significant digit, which would amount to loss of significance. However, in computer floating-point arithmetic, all operations can be viewed as being performed on antilogarithms, for which the rules for significant figures indicate that the number of significant figures remains the same as the smallest number of significant figures in the mantissas. The way to indicate this and represent the answer to 10 significant figures is

$1.000000000\times10^{-10}$
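The decimal demonstration can be reproduced with Python's standard `decimal` module. This is a minimal sketch, assuming that a context with 10 digits of precision stands in for the 10-digit machine; the names `ten_digits` and `z` are illustrative and do not appear in the text above.

```python
from decimal import Context, Decimal

# A context with 10 significant digits stands in for the 10-digit machine.
ten_digits = Context(prec=10)

x_exact = Decimal("0.1234567891234567890")
z = Decimal("0.1234567890")  # the nearby value being subtracted

# Storing x on the 10-digit machine rounds it to y = 0.1234567891.
y = ten_digits.create_decimal(x_exact)

# The default context (28 digits) is wide enough to subtract these inputs exactly.
exact_difference = x_exact - z

# On the 10-digit machine only the rounded value y is available.
machine_difference = ten_digits.subtract(y, z)

print(exact_difference)
print(machine_difference)
```

The machine result carries a single meaningful digit, even though it can still be written out with ten figures.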

Workarounds

It is possible to do computations using an exact fractional representation of rational numbers and keep all significant digits, but this is often prohibitively slower than floating-point arithmetic.
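As a rough illustration of the exact approach, Python's standard `fractions.Fraction` type can hold the decimal values from the demonstration as exact rationals. This is only a sketch; the variable names are illustrative, and the comparison against binary double precision is an added assumption rather than part of the example above.

```python
from fractions import Fraction

# Exact rational representations of the two decimal values.
x = Fraction("0.1234567891234567890")
z = Fraction("0.1234567890")

# Rational subtraction keeps every digit.
exact = x - z

# The same subtraction in binary double precision; the inputs are already
# rounded when the literals are parsed.
approx = 0.1234567891234567890 - 0.1234567890

# Relative error of the floating-point result, measured exactly.
relative_error = abs(Fraction(approx) - exact) / exact

print(exact)
print(float(relative_error))
```

The rational result is exact, at the cost of arbitrary-size integer arithmetic for every operation.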

One of the most important parts of numerical analysis is to avoid or minimize loss of significance in calculations. If the underlying problem is well-posed, there should be a stable algorithm for solving it.

Sometimes clever algebra tricks can change an expression into a form that circumvents the problem. One such trick is to use the well-known equation

$$a^2 - b^2 = (a + b)(a - b).$$

So with the expression $a - b$, multiply numerator and denominator by $a + b$, giving

$$a - b = \frac{a^2 - b^2}{a + b}.$$

Now, can the expression $a^2 - b^2$ be reduced to eliminate the subtraction? Sometimes it can.

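For example, the expression $1 - \sqrt{1 - x}$ can suffer loss of significant bits if $x$ is much smaller than 1. So rewrite the expression as

$$\frac{\left(1 - \sqrt{1 - x}\right)\left(1 + \sqrt{1 - x}\right)}{1 + \sqrt{1 - x}}$$

or

$$\frac{x}{1 + \sqrt{1 - x}}.$$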
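A small experiment shows what such a rewrite buys. The sketch below assumes the expression in question is $1 - \sqrt{1 - x}$ and compares it with the algebraically equivalent form $x / (1 + \sqrt{1 - x})$ in double precision; the function names are hypothetical.

```python
import math

def one_minus_sqrt_naive(x: float) -> float:
    # Subtracts two nearly equal numbers when x is tiny.
    return 1.0 - math.sqrt(1.0 - x)

def one_minus_sqrt_rewritten(x: float) -> float:
    # Same quantity after multiplying by the conjugate; no subtraction of
    # nearly equal numbers remains in the final step.
    return x / (1.0 + math.sqrt(1.0 - x))

x = 1e-20
print(one_minus_sqrt_naive(x))      # 0.0
print(one_minus_sqrt_rewritten(x))  # 5e-21
```

For this `x`, `1.0 - x` already rounds to `1.0`, so the naive form returns zero, while the rewritten form returns approximately $x/2$, which is the correct leading term.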

Loss of significant bits

Let $x$ and $y$ be positive normalized floating-point numbers with $x > y$.

In the subtraction $x - y$, $r$ significant bits are lost where

$$q \le r \le p, \qquad 2^{-p} \le 1 - \frac{y}{x} \le 2^{-q}$$

for some positive integers $p$ and $q$.
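The bound can be evaluated numerically. The sketch below assumes the statement takes the usual form $q \le r \le p$ with $2^{-p} \le 1 - y/x \le 2^{-q}$, and returns the tightest integers $q$ and $p$ for given $x > y > 0$. The function name is hypothetical, and the quantity $1 - y/x$ is itself computed in floating point, so the result is only indicative when $x$ and $y$ agree to nearly full precision.

```python
import math
from typing import Tuple

def lost_bits_bounds(x: float, y: float) -> Tuple[int, int]:
    """Return (q, p) such that 2**-p <= 1 - y/x <= 2**-q."""
    if not (x > y > 0.0):
        raise ValueError("expected x > y > 0")
    t = 1.0 - y / x
    exponent = -math.log2(t)  # t lies between 2**-p and 2**-q
    return math.floor(exponent), math.ceil(exponent)

print(lost_bits_bounds(1.0, 0.75))  # (2, 2)
print(lost_bits_bounds(1.0, 0.9))   # (3, 4)
```

When $y$ is less than half of $x$, this helper returns $q = 0$, meaning that no bits need be lost.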

Instability of the quadratic equation

For example, consider the quadratic equation

$$ax^2 + bx + c = 0,$$

with the two exact solutions:

$$x = \frac{-b \pm \sqrt{b^2 - 4ac}}{2a}.$$

This formula may not always produce an accurate result. For example, when $c$ is very small, loss of significance can occur in either of the root calculations, depending on the sign of $b$.

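The case $a = 1$, $b = 200$, $c = -0.000015$ will serve to illustrate the problem:

$$x^2 + 200x - 0.000015 = 0.$$

We have

$$\sqrt{b^2 - 4ac} = \sqrt{200^2 + 4 \times 1 \times 0.000015} = 200.00000015\ldots$$

In real arithmetic, the roots are

$$\frac{-200 - 200.00000015}{2} = -200.000000075,$$

$$\frac{-200 + 200.00000015}{2} = 0.000000075.$$

In 10-digit floating-point arithmetic:

$$\frac{-200 - 200.0000001}{2} = -200.00000005,$$

$$\frac{-200 + 200.0000001}{2} = 0.00000005.$$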

Notice that the solution of greater magnitude is accurate to ten digits, but the first nonzero digit of the solution of lesser magnitude is wrong.

Because of the subtraction that occurs in the quadratic formula, it is not a stable algorithm for calculating the two roots.
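The instability can be observed directly by running the textbook formula in 10-digit decimal arithmetic. The sketch below uses Python's `decimal` module and assumes the illustrative coefficients $a = 1$, $b = 200$, $c = -0.000015$, whose smaller root is approximately $7.5 \times 10^{-8}$; the function name is hypothetical.

```python
from decimal import Decimal, localcontext

def naive_roots_10_digits(a: Decimal, b: Decimal, c: Decimal):
    """Textbook quadratic formula with every operation rounded to 10 digits."""
    with localcontext() as ctx:
        ctx.prec = 10
        root_disc = (b * b - 4 * a * c).sqrt()
        larger = (-b - root_disc) / (2 * a)
        smaller = (-b + root_disc) / (2 * a)  # subtracts nearly equal numbers
        return larger, smaller

larger, smaller = naive_roots_10_digits(
    Decimal(1), Decimal(200), Decimal("-0.000015")
)
print(larger)
print(smaller)  # 5E-8, although the true root is about 7.5E-8
```

The square root is rounded to 200.0000001, and the subsequent subtraction from 200 leaves only that one rounded digit.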

A better algorithm

A careful floating-point computer implementation combines several strategies to produce a robust result. Assuming that the discriminant $b^2 - 4ac$ is positive, and $b$ is nonzero, the computation would be as follows:[1]

$$x_1 = \frac{-b - \operatorname{sgn}(b)\sqrt{b^2 - 4ac}}{2a}, \qquad x_2 = \frac{2c}{-b - \operatorname{sgn}(b)\sqrt{b^2 - 4ac}} = \frac{c}{a x_1}.$$

Here sgn denotes the sign function, where $\operatorname{sgn}(b)$ is 1 if $b$ is positive, and −1 if $b$ is negative. This avoids cancellation problems between $b$ and the square root of the discriminant by ensuring that only numbers of the same sign are added.
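One possible rendering of this computation in Python is sketched below. It assumes real coefficients with $a \ne 0$, $b \ne 0$ and a positive discriminant, as stated above; the function name is hypothetical, and `math.copysign` plays the role of multiplying by $\operatorname{sgn}(b)$. The discriminant itself is still formed naively here.

```python
import math
from typing import Tuple

def stable_quadratic_roots(a: float, b: float, c: float) -> Tuple[float, float]:
    """Roots of a*x**2 + b*x + c, avoiding cancellation between b and sqrt(disc)."""
    disc = b * b - 4.0 * a * c
    if a == 0.0 or b == 0.0 or disc <= 0.0:
        raise ValueError("sketch assumes a != 0, b != 0 and a positive discriminant")
    # -b and -sgn(b)*sqrt(disc) have the same sign, so this sum cannot cancel.
    q = -b - math.copysign(math.sqrt(disc), b)
    x1 = q / (2.0 * a)   # root of larger magnitude
    x2 = (2.0 * c) / q   # root of smaller magnitude, obtained without subtraction
    return x1, x2

print(stable_quadratic_roots(1.0, -3.0, 2.0))  # (2.0, 1.0)
```

Computing the second root as $2c/q$ uses the fact that the product of the roots is $c/a$, so no subtraction of nearly equal quantities is needed.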

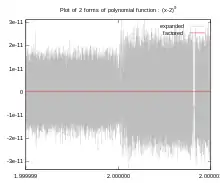

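To illustrate the instability of the standard quadratic formula compared to this formula, consider a quadratic equation with roots $1.786737589984535$ and $1.149782767465722 \times 10^{-8}$. To 16 significant digits, roughly corresponding to double-precision accuracy on a computer, the monic quadratic equation with these roots may be written as

$$x^2 - 1.786737601482363x + 2.054360090947453 \times 10^{-8} = 0.$$

Using the standard quadratic formula and maintaining 16 significant digits at each step yields

$$\sqrt{\Delta} = 1.786737578486707,$$

$$x_1 = (1.786737601482363 + 1.786737578486707)/2 = 1.786737589984535,$$

$$x_2 = (1.786737601482363 - 1.786737578486707)/2 = 0.000000011497828.$$

Note how cancellation has resulted in $x_2$ being computed to only 8 significant digits of accuracy.

The variant formula presented here, however, yields the following:

$$x_1 = (1.786737601482363 + 1.786737578486707)/2 = 1.786737589984535,$$

$$x_2 = 2.054360090947453 \times 10^{-8} / 1.786737589984535 = 1.149782767465722 \times 10^{-8}.$$

Note the retention of all significant digits for $x_2$.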

Note that while the above formulation avoids catastrophic cancellation between $b$ and $\sqrt{b^2 - 4ac}$, there remains a form of cancellation between the terms $b^2$ and $-4ac$ of the discriminant, which can still lead to loss of up to half of the correct significant digits.[2][3] The discriminant needs to be computed in arithmetic of twice the precision of the result to avoid this (e.g. quad precision if the final result is to be accurate to full double precision).[4] This can be in the form of a fused multiply-add operation.[2]

To illustrate this, consider the following quadratic equation, adapted from Kahan (2004):[2]

$$94906265.625x^2 - 189812534x + 94906268.375 = 0$$

This equation has $\Delta = 7.5625$ and roots

$$x_1 = 1.000000028975958,$$

$$x_2 = 1.000000000000000.$$

However, when computed using IEEE 754 double-precision arithmetic corresponding to 15 to 17 significant digits of accuracy, $\Delta$ is rounded to 0.0, and the computed roots are

$$x_1 = 1.000000014487979,$$

$$x_2 = 1.000000014487979,$$

which are both false after the 8th significant digit. This is despite the fact that superficially, the problem seems to require only 11 significant digits of accuracy for its solution.
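The loss inside the discriminant can be checked by comparing double-precision arithmetic with exact rational arithmetic. The sketch below assumes the coefficients $a = 94906265.625$, $b = -189812534$, $c = 94906268.375$, all of which are exactly representable as doubles, and uses Python's `fractions.Fraction` for the exact reference value.

```python
from fractions import Fraction

# Assumed coefficients for this sketch; each is exactly representable in binary.
a, b, c = 94906265.625, -189812534.0, 94906268.375

# Discriminant in double precision: b*b and 4*a*c round to the same double.
disc_double = b * b - 4.0 * a * c

# Discriminant in exact rational arithmetic on the same inputs.
disc_exact = Fraction(b) ** 2 - 4 * Fraction(a) * Fraction(c)

print(disc_double)        # 0.0
print(float(disc_exact))  # 7.5625
```

Both products are near $3.6 \times 10^{16}$, where adjacent doubles are 8 apart, so a difference of 7.5625 is lost to rounding before the subtraction takes place.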

References

- Press, William Henry; Flannery, Brian P.; Teukolsky, Saul A.; Vetterling, William T. (1992). "Section 5.6: Quadratic and Cubic Equations". Numerical Recipes in C (2 ed.).

- Kahan, William Morton (2004-11-20). "On the Cost of Floating-Point Computation Without Extra-Precise Arithmetic" (PDF). Retrieved 2012-12-25.

- Higham, Nicholas John (2002). Accuracy and Stability of Numerical Algorithms (2 ed.). SIAM. p. 10. ISBN 978-0-89871-521-7.

- Hough, David (March 1981). "Applications of the proposed IEEE 754 standard for floating point arithmetic". Computer. IEEE. 14 (3): 70–74. doi:10.1109/C-M.1981.220381.