High Bandwidth Memory

High Bandwidth Memory (HBM) is a high-speed computer memory interface for 3D-stacked SDRAM from Samsung, AMD and SK Hynix. It is used in conjunction with high-performance graphics accelerators and network devices, and in some supercomputers (such as the NEC SX-Aurora TSUBASA and Fujitsu A64FX).[1] The first HBM memory chip was produced by SK Hynix in 2013,[2] and the first devices to use HBM were the AMD Fiji GPUs in 2015.[3][4]

High Bandwidth Memory was adopted by JEDEC as an industry standard in October 2013.[5] The second generation, HBM2, was accepted by JEDEC in January 2016.[6]

Technology

HBM achieves higher bandwidth while using less power in a substantially smaller form factor than DDR4 or GDDR5.[7] This is achieved by stacking up to eight DRAM dies (making it a three-dimensional integrated circuit), including an optional base die (often a silicon interposer[8][9]) with a memory controller, which are interconnected by through-silicon vias (TSVs) and microbumps. The HBM technology is similar in principle to, but incompatible with, the Hybrid Memory Cube interface developed by Micron Technology.[10]

The HBM memory bus is very wide in comparison to other DRAM memories such as DDR4 or GDDR5. An HBM stack of four DRAM dies (4‑Hi) has two 128‑bit channels per die, for a total of 8 channels and a width of 1024 bits. A graphics card/GPU with four 4‑Hi HBM stacks would therefore have a memory bus with a width of 4096 bits. In comparison, the bus width of GDDR memories is 32 bits per channel, with 16 channels for a graphics card with a 512‑bit memory interface.[11] HBM supports up to 4 GB per package.
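The bus-width figures above follow from simple multiplication. The short Python sketch below reproduces them using only the numbers quoted in this section; the constant and function names are illustrative and are not taken from any specification, and the per-die channel count is applied only to the 4‑Hi example given in the text.

```python
# Bus-width arithmetic using only the figures quoted in the text.
# All names here are illustrative, not part of any HBM or GDDR specification.

HBM_CHANNEL_WIDTH_BITS = 128    # width of one HBM channel
HBM_CHANNELS_PER_DIE = 2        # channels per DRAM die in the 4-Hi example
HBM_DIES_PER_STACK = 4          # the 4-Hi stack described in the text
GDDR5_CHANNEL_WIDTH_BITS = 32   # width of one GDDR5 channel


def hbm_stack_width_bits() -> int:
    """Interface width of one 4-Hi HBM stack, in bits."""
    channels = HBM_DIES_PER_STACK * HBM_CHANNELS_PER_DIE
    return channels * HBM_CHANNEL_WIDTH_BITS


def hbm_gpu_bus_width_bits(stacks: int) -> int:
    """Total memory bus width of a GPU with the given number of 4-Hi stacks."""
    return stacks * hbm_stack_width_bits()


def gddr5_channel_count(interface_width_bits: int) -> int:
    """Number of 32-bit GDDR5 channels that make up a given interface width."""
    return interface_width_bits // GDDR5_CHANNEL_WIDTH_BITS


if __name__ == "__main__":
    print(hbm_stack_width_bits())       # one 4-Hi stack
    print(hbm_gpu_bus_width_bits(4))    # four 4-Hi stacks
    print(gddr5_channel_count(512))     # 512-bit GDDR5 interface
```

Run as a script, this prints 1024, 4096 and 16, matching the stack width, the four-stack bus width and the GDDR5 channel count stated above.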

The larger number of connections to the memory, relative to DDR4 or GDDR5, required a new method of connecting the HBM memory to the GPU (or other processor).[12] AMD and Nvidia have both used purpose-built silicon chips, called interposers, to connect the memory and GPU. The interposer has the added advantage of requiring the memory and processor to be physically close, which shortens memory paths. However, as semiconductor device fabrication is significantly more expensive than printed circuit board manufacture, this adds cost to the final product.

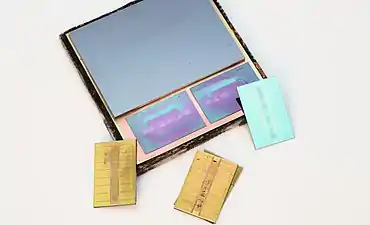

HBM DRAM die

HBM controller die

HBM memory on an AMD Radeon R9 Nano graphics card's GPU package

Interface

The HBM DRAM is tightly coupled to the host compute die with a distributed interface. The interface is divided into channels that are completely independent of one another and are not necessarily synchronous to each other. The HBM DRAM uses a wide-interface architecture to achieve high-speed, low-power operation. It uses a 500 MHz differential clock CK_t / CK_c (where the suffix "_t" denotes the "true", or "positive", component of the differential pair, and "_c" stands for the "complementary" one). Commands are registered at the rising edge of CK_t, CK_c. Each channel interface maintains a 128‑bit data bus operating at double data rate (DDR). HBM supports transfer rates of 1 GT/s per pin (each transfer carrying 1 bit), yielding an overall package bandwidth of 128 GB/s.[13]
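The 1 GT/s and 128 GB/s figures can be derived from the clock rate, the double data rate signalling and the bus width. The following Python sketch shows that arithmetic; the function names are hypothetical, and the 1024‑bit package width is the 4‑Hi stack width (eight 128‑bit channels) given in the Technology section.

```python
# Bandwidth arithmetic for first-generation HBM, from the figures in the text.
# Function names are illustrative only.

CLOCK_MHZ = 500             # differential clock CK_t / CK_c
TRANSFERS_PER_CLOCK = 2     # double data rate: two transfers per clock cycle
CHANNEL_WIDTH_BITS = 128    # data bus width of one channel
PACKAGE_WIDTH_BITS = 1024   # eight 128-bit channels per stack


def transfer_rate_gt_s(clock_mhz: float, transfers_per_clock: int) -> float:
    """Per-pin transfer rate in gigatransfers per second."""
    return clock_mhz * transfers_per_clock / 1000.0


def bandwidth_gb_s(rate_gt_s: float, width_bits: int) -> float:
    """Bandwidth in gigabytes per second: one bit per pin per transfer."""
    return rate_gt_s * width_bits / 8


if __name__ == "__main__":
    rate = transfer_rate_gt_s(CLOCK_MHZ, TRANSFERS_PER_CLOCK)
    print(rate)                                      # per-pin rate, GT/s
    print(bandwidth_gb_s(rate, CHANNEL_WIDTH_BITS))  # one channel, GB/s
    print(bandwidth_gb_s(rate, PACKAGE_WIDTH_BITS))  # whole package, GB/s
```

With these inputs the per-pin rate is 1.0 GT/s, a single channel delivers 16 GB/s, and the full 1024‑bit package delivers 128 GB/s.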

HBM2

The second generation of High Bandwidth Memory, HBM2, also specifies up to eight dies per stack and doubles pin transfer rates up to 2 GT/s. Retaining 1024‑bit wide access, HBM2 is able to reach 256 GB/s memory bandwidth per package. The HBM2 spec allows up to 8 GB per package. HBM2 is predicted to be especially useful for performance-sensitive consumer applications such as virtual reality.[14]

On January 19, 2016, Samsung announced early mass production of HBM2, at up to 8 GB per stack.[15][16] SK Hynix also announced availability of 4 GB stacks in August 2016.[17]

HBM2 DRAM die

HBM2 controller die

The HBM2 interposer of a Radeon RX Vega 64 GPU, with removed HBM dies; the GPU is still in place

HBM2E

In late 2018, JEDEC announced an update to the HBM2 specification, providing for increased bandwidth and capacities.[18] Up to 307 GB/s per stack (2.5 Tbit/s effective data rate) is now supported in the official specification, though products operating at this speed had already been available. Additionally, the update added support for 12‑Hi stacks (12 dies), making capacities of up to 24 GB per stack possible.
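The figures in this update can be cross-checked against each other. The Python sketch below works backwards from the quoted 307 GB/s to an implied per-pin rate, and from the quoted 24 GB to an implied per-die capacity. It assumes the 1024‑bit interface width stated for HBM2 and an even split of capacity across the 12 dies; the derived values are approximations obtained from the rounded numbers in the text, not figures quoted from the specification.

```python
# Cross-checking the late-2018 HBM2 update using the rounded figures in the text.
# Assumptions: 1024-bit stack interface, capacity split evenly across dies.

STACK_WIDTH_BITS = 1024
STACK_BANDWIDTH_GB_S = 307   # quoted per-stack bandwidth
STACK_CAPACITY_GB = 24       # quoted maximum capacity for a 12-Hi stack
DIES_PER_STACK = 12


def implied_pin_rate_gt_s(bandwidth_gb_s: float, width_bits: int) -> float:
    """Per-pin transfer rate (GT/s) implied by a bandwidth and bus width."""
    return bandwidth_gb_s * 8 / width_bits


def bandwidth_tbit_s(bandwidth_gb_s: float) -> float:
    """Convert GB/s to Tbit/s (decimal units)."""
    return bandwidth_gb_s * 8 / 1000


def capacity_per_die_gbit(capacity_gb: float, dies: int) -> float:
    """Per-die capacity in gigabits, assuming an even split across dies."""
    return capacity_gb / dies * 8


if __name__ == "__main__":
    print(implied_pin_rate_gt_s(STACK_BANDWIDTH_GB_S, STACK_WIDTH_BITS))
    print(bandwidth_tbit_s(STACK_BANDWIDTH_GB_S))
    print(capacity_per_die_gbit(STACK_CAPACITY_GB, DIES_PER_STACK))
```

The results are roughly 2.4 GT/s per pin, about 2.46 Tbit/s (consistent with the rounded 2.5 Tbit/s above), and 16 Gbit (2 GB) per die.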

On March 20, 2019, Samsung announced their Flashbolt HBM2E, featuring eight dies per stack, a transfer rate of 3.2 GT/s, providing a total of 16 GB and 410 GB/s per stack.[19]

On August 12, 2019, SK Hynix announced their HBM2E, featuring eight dies per stack, a transfer rate of 3.6 GT/s, providing a total of 16 GB and 460 GB/s per stack.[20][21] On 2 July 2020, SK Hynix announced that mass production had begun.[22]
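The per-stack bandwidths announced by the two vendors are consistent with their quoted transfer rates. The Python sketch below compares the computed and announced values; it assumes that HBM2E keeps the 1024‑bit stack interface described for HBM2, and the dictionary layout and names are purely illustrative.

```python
# Comparing announced HBM2E bandwidths with values computed from pin rates.
# Assumption: HBM2E retains the 1024-bit stack interface described for HBM2.

STACK_WIDTH_BITS = 1024

# product name -> (transfer rate in GT/s, announced bandwidth in GB/s)
ANNOUNCED = {
    "Samsung Flashbolt HBM2E": (3.2, 410),
    "SK Hynix HBM2E": (3.6, 460),
}


def stack_bandwidth_gb_s(rate_gt_s: float) -> float:
    """Per-stack bandwidth in GB/s for a given per-pin transfer rate."""
    return rate_gt_s * STACK_WIDTH_BITS / 8


if __name__ == "__main__":
    for name, (rate, quoted) in ANNOUNCED.items():
        computed = stack_bandwidth_gb_s(rate)
        print(f"{name}: computed {computed:.1f} GB/s, announced {quoted} GB/s")
```

The computed values are about 409.6 GB/s and 460.8 GB/s, so each announced figure is a rounding of the computed one to within 1 GB/s.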

HBMnext

In late 2020, Micron announced that the HBM2E standard would be updated and, alongside that, unveiled the next standard, known as HBMnext. Originally proposed as HBM3, it is described as a major generational step from HBM2 and the replacement for HBM2E. The new memory is expected to reach the market in Q4 2022 and, as the name suggests, is likely to introduce a new architecture.

Although the architecture might be overhauled, leaks suggest that performance will be similar to that of the updated HBM2E standard. This memory is likely to be used mostly in data center GPUs.[23][24][25][26]

History

Background

Die-stacked memory was initially commercialized in the flash memory industry. Toshiba introduced a NAND flash memory chip with eight stacked dies in April 2007,[27] followed by Hynix Semiconductor introducing a NAND flash chip with 24 stacked dies in September 2007.[28]

3D-stacked random-access memory (RAM) using through-silicon via (TSV) technology was commercialized by Elpida Memory, which developed the first 8 GB DRAM chip (stacked with four DDR3 SDRAM dies) in September 2009, and released it in June 2011. In 2011, SK Hynix introduced 16 GB DDR3 memory (40 nm class) using TSV technology,[2] Samsung Electronics introduced 3D-stacked 32 GB DDR3 (30 nm class) based on TSV in September, and then Samsung and Micron Technology announced TSV-based Hybrid Memory Cube (HMC) technology in October.[29]

Development

The development of High Bandwidth Memory began at AMD in 2008 to solve the problem of ever-increasing power usage and form factor of computer memory. Over the next several years, AMD developed procedures to solve die-stacking problems with a team led by Senior AMD Fellow Bryan Black.[30] To realize its vision of HBM, AMD enlisted partners from the memory industry, particularly Korean company SK Hynix,[30] which had prior experience with 3D-stacked memory,[2][28] as well as partners from the interposer industry (Taiwanese company UMC) and packaging industry (Amkor Technology and ASE).[30]

The development of HBM was completed in 2013, when SK Hynix built the first HBM memory chip.[2] HBM was adopted as industry standard JESD235 by JEDEC in October 2013, following a proposal by AMD and SK Hynix in 2010.[5] High volume manufacturing began at a Hynix facility in Icheon, South Korea, in 2015.

The first GPU utilizing HBM was the AMD Fiji, which was released in June 2015, powering the AMD Radeon R9 Fury X.[3][31][32]

In January 2016, Samsung Electronics began early mass production of HBM2.[15][16] The same month, HBM2 was accepted by JEDEC as standard JESD235a.[6] The first GPU chip utilizing HBM2 was the Nvidia Tesla P100, which was officially announced in April 2016.[33][34]

Future

At Hot Chips in August 2016, both Samsung and Hynix announced next-generation HBM memory technologies.[35][36] Both companies announced high-performance products expected to have increased density, increased bandwidth, and lower power consumption. Samsung also announced a lower-cost version of HBM under development, targeting mass markets. Removing the buffer die and decreasing the number of TSVs lowers cost, though at the expense of a decreased overall bandwidth (200 GB/s).

See also

- Stacked DRAM

- eDRAM

- Chip stack multi-chip module

- Hybrid Memory Cube: stacked memory standard from Micron Technology (2011)

References

- ISSCC 2014 Trends Archived 2015-02-06 at the Wayback Machine page 118 "High-Bandwidth DRAM"

- "History: 2010s". SK Hynix. Retrieved 8 July 2019.

- Smith, Ryan (2 July 2015). "The AMD Radeon R9 Fury X Review". Anandtech. Retrieved 1 August 2016.

- Morgan, Timothy Prickett (March 25, 2014). "Future Nvidia 'Pascal' GPUs Pack 3D Memory, Homegrown Interconnect". EnterpriseTech. Retrieved 26 August 2014. "Nvidia will be adopting the High Bandwidth Memory (HBM) variant of stacked DRAM that was developed by AMD and Hynix"

- High Bandwidth Memory (HBM) DRAM (JESD235), JEDEC, October 2013

- "JESD235a: High Bandwidth Memory 2". 2016-01-12.

- HBM: Memory Solution for Bandwidth-Hungry Processors Archived 2015-04-24 at the Wayback Machine, Joonyoung Kim and Younsu Kim, SK Hynix // Hot Chips 26, August 2014

- https://semiengineering.com/whats-next-for-high-bandwidth-memory/

- https://semiengineering.com/knowledge_centers/packaging/advanced-packaging/2-5d-ic/interposers/

- Where Are DRAM Interfaces Headed? Archived 2018-06-15 at the Wayback Machine // EETimes, 4/18/2014 "The Hybrid Memory Cube (HMC) and a competing technology called High-Bandwidth Memory (HBM) are aimed at computing and networking applications. These approaches stack multiple DRAM chips atop a logic chip."

- Highlights of the High-Bandwidth Memory (HBM) Standard. Mike O’Connor, Sr. Research Scientist, Nvidia // The Memory Forum – June 14, 2014

- Smith, Ryan (19 May 2015). "AMD Dives Deep On High Bandwidth Memory – What Will HBM Bring to AMD?". Anandtech. Retrieved 12 May 2017.

- "High-Bandwidth Memory (HBM)" (PDF). AMD. 2015-01-01. Retrieved 2016-08-10.

- Valich, Theo (2015-11-16). "NVIDIA Unveils Pascal GPU: 16GB of memory, 1TB/s Bandwidth". VR World. Retrieved 2016-01-24.

- "Samsung Begins Mass Producing World's Fastest DRAM – Based on Newest High Bandwidth Memory (HBM) Interface". news.samsung.com.

- "Samsung announces mass production of next-generation HBM2 memory – ExtremeTech". 19 January 2016.

- Shilov, Anton (1 August 2016). "SK Hynix Adds HBM2 to Catalog". Anandtech. Retrieved 1 August 2016.

- "JEDEC Updates Groundbreaking High Bandwidth Memory (HBM) Standard" (Press release). JEDEC. 2018-12-17. Retrieved 2018-12-18.

- "Samsung Electronics Introduces New High Bandwidth Memory Technology Tailored to Data Centers, Graphic Applications, and AI | Samsung Semiconductor Global Website". www.samsung.com. Retrieved 2019-08-22.

- "SK Hynix Develops World's Fastest High Bandwidth Memory, HBM2E". www.skhynix.com. August 12, 2019. Retrieved 2019-08-22.

- "SK Hynix Announces its HBM2E Memory Products, 460 GB/S and 16GB per Stack".

- "SK hynix Starts Mass-Production of High-Speed DRAM, "HBM2E"". 2 July 2020.

- https://videocardz.com/newz/micron-reveals-hbmnext-successor-to-hbm2e

- https://amp.hothardware.com/news/micron-announces-hbmnext-as-eventual-replacement-for-hbm2e

- https://www.extremetech.com/computing/313829-micron-introduces-hbmnext-gddr6x-confirms-rtx-3090

- https://www.tweaktown.com/news/74503/micron-unveils-hbmnext-the-successor-to-hbm2e-for-next-gen-gpus/amp.html

- "TOSHIBA COMMERCIALIZES INDUSTRY'S HIGHEST CAPACITY EMBEDDED NAND FLASH MEMORY FOR MOBILE CONSUMER PRODUCTS". Toshiba. April 17, 2007. Archived from the original on November 23, 2010. Retrieved 23 November 2010.

- "Hynix Surprises NAND Chip Industry". Korea Times. 5 September 2007. Retrieved 8 July 2019.

- Kada, Morihiro (2015). "Research and Development History of Three-Dimensional Integration Technology". Three-Dimensional Integration of Semiconductors: Processing, Materials, and Applications. Springer. pp. 15–8. ISBN 9783319186757.

- High-Bandwidth Memory (HBM) from AMD: Making Beautiful Memory, AMD

- Smith, Ryan (19 May 2015). "AMD HBM Deep Dive". Anandtech. Retrieved 1 August 2016.

- AMD Ushers in a New Era of PC Gaming including World’s First Graphics Family with Revolutionary HBM Technology

- Smith, Ryan (5 April 2016). "Nvidia announces Tesla P100 Accelerator". Anandtech. Retrieved 1 August 2016.

- "NVIDIA Tesla P100: The Most Advanced Data Center GPU Ever Built". www.nvidia.com.

- Smith, Ryan (23 August 2016). "Hot Chips 2016: Memory Vendors Discuss Ideas for Future Memory Tech – DDR5, Cheap HBM & More". Anandtech. Retrieved 23 August 2016.

- Walton, Mark (23 August 2016). "HBM3: Cheaper, up to 64GB on-package, and terabytes-per-second bandwidth". Ars Technica. Retrieved 23 August 2016.

External links

- High Bandwidth Memory (HBM) DRAM (JESD235), JEDEC, October 2013

- Lee, Dong Uk; Kim, Kyung Whan; Kim, Kwan Weon; Kim, Hongjung; Kim, Ju Young; et al. (9–13 Feb 2014). "A 1.2V 8Gb 8‑channel 128GB/s high-bandwidth memory (HBM) stacked DRAM with effective microbump I/O test methods using 29nm process and TSV". 2014 IEEE International Solid-State Circuits Conference – Digest of Technical Papers. IEEE (published 6 March 2014): 432–433. doi:10.1109/ISSCC.2014.6757501. S2CID 40185587.

- HBM vs HBM2 vs GDDR5 vs GDDR5X Memory Comparison